Uncanny Tools: Workshop Series

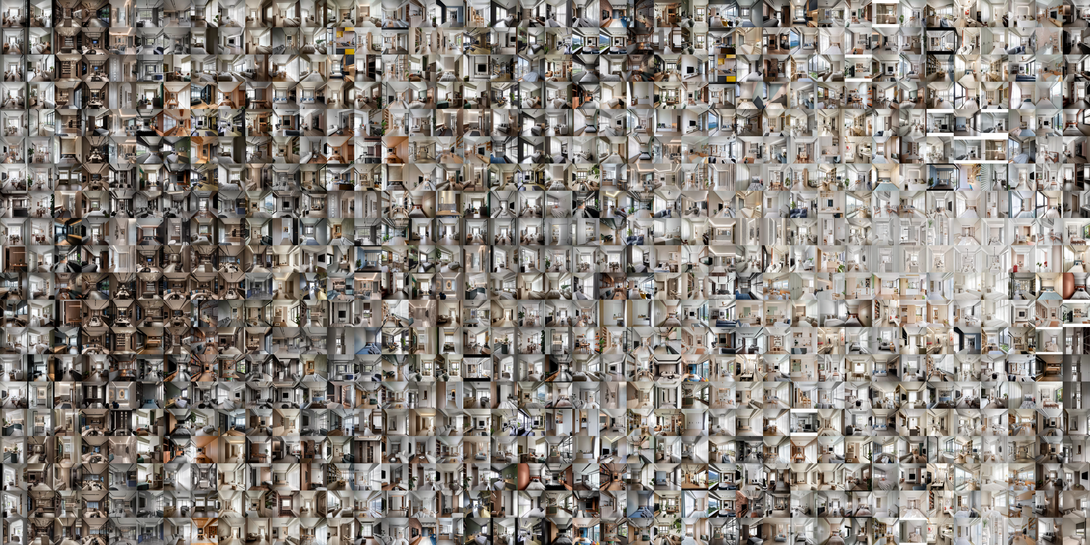

AI Slop.

With Derrick Schultz (Artificial Images), Oscar Keyes (Virginia Commonwealth University), Brian Bird & Ethan Nevidomsky (Deeplocal), and Jimmy Wei-Chun Cheng (Carnegie Mellon Architecture)

Join us on March 28th and 29th for a weekend of four workshops that explore and build “Uncanny Tools”.

This experimental workshop series approaches AI as a strange instrument poised to replace familiar tools and practices. Together we'll explore alternative practices and creative reinterpretations that bring us into conversation with both the strange and familiar qualities of AI tools.

Overall Schedule

The program is curated by Daragh Byrne (Carnegie Mellon Architecture), Marti Louw (HCII + IDeATe), Nica Ross (STUDIO for Creative Inquiry + School of Drama), and Tuliza Sindi (Carnegie Mellon Architecture).

Uncanny Tools is supported by the National Endowment for the Arts (NEA) through its Research Grants in the Arts program and will run in 2026 and 2027. The NEA funding supports a research project that will explore how learners in the arts understand and relate to AI in creative work.

Apply for Workshops: All workshops are free and open to the public. However, the workshops are limited to a small number of attendees, so applications are required. The application form will close on Wednesday, March 25. First-round notifications will be sent by Friday, March 20.

Apply to participate in a workshop

Workshop Attendees Schedule

Saturday, March 28, 2026

Hands-on Workshops: Four workshops led by Derrick Schultz, Oscar Keyes, Brian Bird & Ethan Nevidomsky, and Jimmy Wei-Chun Cheng. Registration required. Lunch and materials provided.

Sunday, March 29, 2026

12pm–4pm: Open Work and Showcase. The showcase is open to all and will share the outcomes of the four workshops.

Public Events

Sunday, March 29, 2026

3pm–4pm: Uncanny Tools Showcase, College of Fine Arts Room 214, with Derrick Schultz, Oscar Keyes, Brian Bird & Ethan Nevidomsky, Jimmy Wei-Chun Cheng, and workshop participants.

If you can’t make it to the workshops or the lectures, you can still join us on Sunday between 3pm and 4pm in CFA 214 to hear about the outcomes of each workshop. Each group will share a short overview of their workshop and the outcomes they arrived at.

Workshop Descriptions

Dadaist Filmmaking: Re-Editing the Readymade Film

This workshop explores filmmaking as algorithmic assembly, where hyperlogical and absurdist rules replace traditional narrative structure. You'll collaborate to build a shared corpus of video sourced from archives, screen recordings, AI-generated footage, and whatever else you find or make. These clips are then tagged with metadata produced by various AI tools and algorithms: content descriptions, color palettes, audio transcripts, and visual embeddings. This metadata is our editing vocabulary. We will then resequence the clips according to constraints that ignore traditional continuity: match shots by dominant color, sequence them by audio similarity, or let visual embeddings determine what comes next. These non-narrative sequences reveal new structures and ideas. You'll leave Dadaist Filmmaking with a number of short films and new approaches to apply to your own practice. No previous filmmaking experience is required.

Workshop Lead

Derrick Schultz is a post-AI artist, filmmaker, and educator. Utilizing machine learning and other computational techniques, his work explores generative abstraction and algorithmic filmmaking.

Blooming from Noise: Digging into the Roots of AI-Generated Images

In an era of "black box" generative AI tools, concerns about the ethics of AI are not just valid critiques but often a necessary pedagogical stance. When every tool feels like it has the potential to do irreparable harm, it can be difficult to imagine classroom activities that meaningfully address text-to-image generation, especially with regard to environmental sustainability and exploitative data training practices. This workshop introduces and uses the Common-Canvas model, built on open-source, Creative Commons materials. Participants will also co-construct a small-scale, low-energy custom model built on locally sourced images of flowers. During downtime, while the model is training, we will hold an open discussion aimed at demystifying the underlying mechanics of diffusion models and imagining their implications in the classroom. We will cover a range of techniques and tools that can be applied to both image verification and image generation.

Workshop Lead

Oscar Keyes, Ph.D. (he/him/his) is the Multimedia Teaching & Learning Librarian at Virginia Commonwealth University in Richmond, VA. His research investigates the emergence of cameras in education and their parallels with emerging technologies today.

Slopera: AI Slop Operas

Participants design AI characters by writing system prompts loaded with contradictions and built-in failure modes — a detective afraid of clues, a diplomat from a country that doesn't exist, a haunted toaster with romantic ambitions. Groups of 3–4 then throw their characters into a shared scenario (a hostage negotiation, a dinner party, a courtroom trial) where a director agent orchestrates who speaks, when to escalate, and when to pull the rug out. The whole thing gets rendered as a short audio drama — mismatched voices and all — powered by a cluster of Raspberry Pis.

Workshop Leads

Brian Bird is a Creative Technologist and Tech Director at Deeplocal specializing in Immersive Media, with over 20 years of experience working across technology and creative roles.

Ethan Nevidomsky is a creative technologist at Deeplocal with a specialty in prototyping and real-time audio-visual experiences.

Image Sabotage: Unfolding the Mediated Reality

Many contemporary AI models are built to predict what is most probable, optimizing for efficiency and accuracy; art and design, by contrast, explore what is possible, operating through ambiguity, abstraction, and interpretation. Trained on dominant patterns across vast internet datasets, these models raise an essential question: which voices are excluded from this shared language? Bias in AI is therefore not only an ethical concern but a representational one, shaping what can be seen, described, and imagined. This workshop investigates how AI might be repurposed as a creative medium to reveal alternative realities and surface marginalized perspectives. Participants treat AI as a medium rather than a neutral tool, constructing their own situated viewpoint from a shared dataset of domestic spaces. Through customized search and image-generation workflows, they create a composite media projection that reveals how AI organizes domestic reality—and where alternative narratives begin to emerge.

Workshop Lead

Jimmy Wei-Chun Cheng (Carnegie Mellon Architecture) is a designer, researcher, and educator working at the intersection of architecture, artificial intelligence, and media theory. He is currently a faculty member in the School of Architecture at Carnegie Mellon University and a Lead Organizer of the AI Architecture Symposium, Playing Models.

Speakers & Workshop Leads


Derrick Schultz is a post-AI artist, filmmaker, and educator. Utilizing machine learning and other computational techniques, his work explores generative abstraction and algorithmic filmmaking. He has produced work for The New York Times, The New Yorker, and more. Derrick was the Lead Creative Technologist on Ho Tzu Nyen’s Phantoms of Endless Day, an endlessly shifting generative AI film re-envisioning the artist’s previously incomplete film. He is a product lead at Titles, helping artists train AI models on their own work while maintaining attribution to the originals and allowing a community of creators to explore each artist’s unique style.


Oscar Keyes, Ph.D. (he/him/his) is the Multimedia Teaching & Learning Librarian at Virginia Commonwealth University in Richmond, VA. His research investigates the emergence of cameras in education and their parallels with emerging technologies today. His pedagogical approach considers how social issues can be critically scaffolded into technical instruction for various digital tools, such as image-editing software, game engines, and generative artificial intelligence. He has taught (new and old) media arts in various spaces, including higher education, K-12 schools, summer camps, community-based arts organizations, and detention centers. When he’s not busy teaching, Keyes still makes movies with his friends.


Brian Bird is a Creative Technologist and Tech Director specializing in Immersive Media, with over 20 years of experience working across technology and creative roles.

His work spans the full spectrum of experiential production — from robotic installations and broadcast integrations to particle simulations, XR experiences, and AI-driven interactive work, deployed at venues and live events across the US and internationally.

Today, Brian is a Creative Tech Director at Deeplocal in Pittsburgh, where he builds interactive installations and immersive experiences for clients including Google, McDonald's, and P&G — with a growing focus on AI-driven work that invites audiences to generate and contribute their own content.


Ethan Nevidomsky is a creative technologist at Deeplocal with a specialty in prototyping and real-time audio-visual experiences. They graduated from MIT with a double major in computer science and comparative media studies and primarily work in TouchDesigner. They also build video effects and perform at events around Pittsburgh under the name otherground.


Jimmy Wei-Chun Cheng is a designer, researcher, and educator working at the intersection of architecture, artificial intelligence, and media theory. He is currently a faculty member in the School of Architecture at Carnegie Mellon University and a Lead Organizer of the AI Architecture Symposium, Playing Models. Prior to joining CMU, he taught studios, workshops, and seminars at UCL Bartlett, the Boston Architectural College, the Rhode Island School of Design, and the Architectural Association Visiting School Seoul. Cheng’s research investigates how digital media and technology transform the architectural design process, with a particular emphasis on digital representation in the context of artificial intelligence. His work examines how AI affects the meaning of language, imagery, and form, analyzing these impacts through the frameworks of semiotics, media theory, and simulation.